KPIs, Velocity, and Other Destructive Metrics

"It is wrong to suppose that if you can’t measure it, you can’t manage it—a costly myth."

The Deming quote at the top of this post is often twisted into something worthy of Frederick Taylor: "if you can't measure it, you can't manage it." Deming would disagree. You can—in fact, must—manage things you can't measure, because in software, there are virtually no measurements that have any value. Wasting time collecting measurements that don't lead to improvement is not only costly, it's actively destructive.

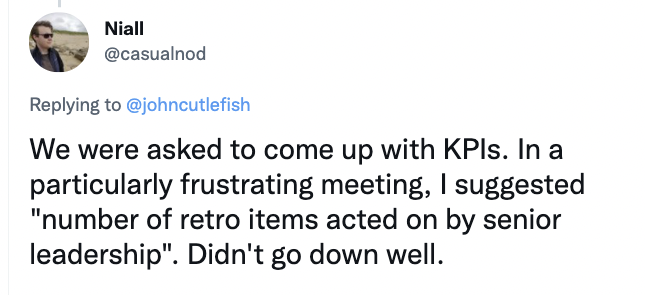

KPI (Key Performance Indicator) metrics and Agile just don't mix. They are the software version of Taylorism. One of my LinkedIn compatriots (Steve Gordon) said it pretty well: "The only reason for KPIs is if you do not trust your developers to deliver working software to the best of their ability and continuously learn to do it better." Trust and respect are central to agility.

KPI thinking, though, is central to the way many shops work, even so-called Agile ones. They take the so-called Chrysler management model as their guide. Chrysler collected lots of data into a central place, chewed on it, then spit out directives forcing production changes that they hoped would improve the data. By the time this process went full circle, however, the actual reality on the factory floor had changed. Since the management decisions were based on obsolete data, the resulting changes ranged from ineffective to destructive. The data crunching and the real world of the production floor were far separated, both physically and in time.

Toyota, from which Lean thinking emerged, had a different idea: Focus on continuous process improvement, and productivity takes care of itself. The people doing the work are responsible for the improvements. If anybody measures anything, it's the actual workers, who act on those measurements immediately. As in all agile thinking, the short feedback loops and immediate change are what made the approach effective. Toyota believes that the most important factor in productivity is a pervasive continuous-improvement culture, and I agree strongly. (For more on that, check out Mike Rother's Toyota Kata.)

So, are metrics never useful? They can be, provided that the metrics are based on real things and are actionable. Whether you can use them to measure "performance" is another matter. Even defining "performance" in a software context is difficult to impossible. We recognize it when we see it, but we can't really measure it.

For example, velocity (average points per sprint) is not a performance metric. If anything at all, it’s a measure of output that tells you nothing about the value of whatever you’re producing, and it’s not even a good measure of output. For one thing, the basic unit (a point) is not a measurable quantity. It's a judgment. You can't derive a quantitative measure from qualitative input.

More importantly, velocity is a gauge, not a control (to quote my friend Tim Ottinger). You observe the amount of work you actually complete in each sprint. The average of those observations is your velocity, and it changes all the time. If the work you complete is always below your velocity, that tells me that you're making up a number rather than using observations.
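To make the "gauge" idea concrete, here's a minimal sketch that treats velocity as nothing more than the mean of recently observed sprint totals. The three-sprint window and the sample numbers are hypothetical assumptions, not a recommendation. Because a mean always falls between the smallest and largest values it averages, completed work can't sit below a genuinely observed velocity in every sprint of its window.

```python
from statistics import mean


def velocity(completed_points: list[int], window: int = 3) -> float:
    """Velocity as a gauge: the mean of the last `window` observed sprint totals.

    The window size is an illustrative assumption.
    """
    if not completed_points:
        raise ValueError("velocity needs at least one observed sprint")
    return float(mean(completed_points[-window:]))


# Hypothetical observations: points actually completed in each sprint so far.
observed = [18, 22, 20, 17, 25]

# The gauge moves every time a new observation arrives.
for sprint in range(1, len(observed) + 1):
    print(f"after sprint {sprint}: velocity = {velocity(observed[:sprint]):.1f}")

# An observed average can never exceed every observation it was computed from.
recent = observed[-3:]
assert min(recent) <= velocity(observed) <= max(recent)
```

Nothing here is a target you can steer toward; the number is simply recomputed from whatever actually happened.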

Individual velocity as a KPI is particularly abhorrent. (The fact that Jira provides this metric is a good reason to get rid of Jira. It's just pandering to dysfunction and promoting micro-mismanagement.) You estimate a story at 2 points based on incomplete information—and all estimates in the Agile world are based on incomplete information. You discover something as you're programming, and as a result spend all week working on that story. You do a spectacular job. You are nonetheless reprimanded by a Jira-obsessed manager because your velocity is so low. That's just abuse, plain and simple.

Then there’s the Goldratt factor. Eliyahu Goldratt said: “Tell me how you measure me, and I will tell you how I will behave.” Given that a point is an arbitrary number, I can make my velocity whatever I want. You need to see 200 points/sprint for me to get that bonus? Sure! Whatever you want! I can push out vast quantities of low-value, easy stories that nobody wants if that’s what I need to do to get that bonus!

Similarly, meeting your estimates tells me nothing except that you can create estimates that you can meet. I’ll happily multiply my guesses by 100 if that’s what it takes! Using velocity as a KPI really says: "we don't care how good your work is or how valuable you are to the company—all that matters is how well you can estimate."

As I said, destructive.

Velocity is equally useless as a KPI at the team level. First, every team has a different notion of what a "point" is. Comparing velocity between teams is like saying that basketball teams are "more productive" than baseball teams because they rack up more points during the game. Velocity is also a moving target. It's something that you measure so that you can guess what you can accomplish in the next sprint (and it's not even a particularly good metric for that). It's an average: the number is adjusted after every sprint. And there's nothing actionable about velocity—it tells you nothing about how to improve.

Another problem with velocity as a KPI (or any KPI applied to a team as compared to the entire organization) is that it gets you focused on local optimization. It's the speed of the entire organization that matters—the time it takes for an idea that's a gleam in somebody's eye to get into your customer's hands. An individual team's pace within that system is usually irrelevant. Imagine three hikers walking along a narrow trail. If the people at the two ends are walking at a fixed pace, it doesn't matter how fast the guy in the middle goes. Eventually, he'll catch up with the guy in front. What matters, however, is the speed at which the last hiker is walking. The product doesn't ship until he arrives. As long as the work is inside the production system, it's a liability—it costs you money and doesn't make a profit. Working on too many things is just wasting money, in the same way that our middle hiker bouncing around in the space available to him is wasting energy. It's better to work/walk at the right pace. Measuring the velocity of a single team is like measuring the speed of that middle hiker. It doesn't tell you anything useful. (The hiker analogy comes from Eliyahu Goldratt's The Goal, by the way. If you haven't, read it.)
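The hiker analogy can be turned into a toy simulation. This is a sketch under simplifying assumptions of my own, not Goldratt's model: work arrives as identical items, passes through three stages in order, and each stage can finish at most a fixed number of items per time step (the capacities are hypothetical). Speeding up the middle stage changes nothing about what ships; only the constraint, here the last stage, sets the delivery rate, and everything else just piles up as work in progress.

```python
def simulate(capacities: list[int], arrivals_per_step: int, steps: int) -> tuple[int, list[int]]:
    """Push identical work items through a line of stages.

    Returns (items shipped, items still queued in front of each stage).
    """
    queues = [0] * len(capacities)  # work waiting in front of each stage
    shipped = 0
    for _ in range(steps):
        queues[0] += arrivals_per_step
        # Walk the stages back to front so an item advances at most one stage per step.
        for i in reversed(range(len(capacities))):
            done = min(queues[i], capacities[i])
            queues[i] -= done
            if i + 1 < len(capacities):
                queues[i + 1] += done
            else:
                shipped += done
    return shipped, queues


# Hypothetical capacities (items per step) for three stages in a row.
baseline = simulate([5, 5, 3], arrivals_per_step=5, steps=10)
faster_middle = simulate([5, 10, 3], arrivals_per_step=5, steps=10)
faster_last = simulate([5, 5, 5], arrivals_per_step=5, steps=10)

print("baseline:     ", baseline)
print("faster middle:", faster_middle)
print("faster last:  ", faster_last)
```

With these numbers, the baseline and faster-middle runs ship exactly the same amount and leave the same pile of queued work, while raising the last stage's capacity raises output and shrinks the queue.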

Even if local optimization did work, you typically cannot "improve" velocity locally because many of the impediments are institutional. Pushing a team to improve when the means to do that is out of their control is another form of abuse. And even if your team does improve its processes dramatically, the velocity will remain unchanged because the definition of a "point" will necessarily shift. You can do more in one point than you used to, but it's still one point.

Here are some examples of other worthless metrics that are often treated as KPIs. A real KPI measures outcomes. None of these do. (Feel free to add more in the comments):

- anything that compares what you deliver against an up-front specification. Specifications change. They change because both we and our customers learn based on what we deliver. We never deliver what we think we will up front.

- anything to do with tasks. We don't plan tasks. We plan stories and the developers figure out the tasks. The list of tasks changes as you work.

- anything to do with milestones. You can't set milestones without that soon-to-be obsolete up-front plan.

- anything to do with commitments. The notion of a "Sprint commitment" was removed from the Scrum Guide years ago because it was so destructive. The ratio of the number of stories you thought you'd deliver to the number you actually delivered is just measuring how much you learned during development. The things you learned rendered your estimates incorrect. So what? The bigger the discrepancy, the better! Agile organizations are learning organizations.

- anything to do with estimates. Metrics focused on estimates do nothing but measure your ability to estimate (or fudge an estimate). They don't measure work.

- variation from a schedule. Again, long term schedules require up-front plans. We do not do fixed-scope work, in a specific time, set by up-front guesswork, formed from an inaccurate plan.

- defect density. If this number is > 0, you're in deep trouble. There should be nothing to measure. Do TDD.

- the amount of code or number of features (including function-point analysis). We deliver value, not features. We want to write the least code possible (with the fewest features) that provides the most value. Feature counts are irrelevant if we haven't delivered value. If we could measure it, one of the best metrics would be the amount of code we didn't write.

- ROI. It's too fuzzy a number, often based on wishful thinking.

Collecting a metric that doesn't show you how to improve is waste.

So, what internal performance-related things might be worth measuring? (None of these are KPIs, either, but please add any real agile KPIs—measured outcomes in an Agile organization that track actual performance—to the comments if you know of any):

- the number of improvements you've made to your process over time. Make this into a game that encourages everybody to improve continuously. Every time you make an improvement, tack a descriptive sticky note to the wall. Everybody uses a different color, and whoever has the most notes wins!

- the number of tests you write before you code

- the number of experiments you've performed

- the number of process and institutional changes instigated by those experiments

- the number of things you've learned in the past month

- the number of validated business-level hypotheses you've developed

- the number of times a week you talk to an actual customer

- the number of changes to your backlog (if it's not changing, why not?)

- the ratio of implemented to non-implemented customer-driven changes requested mid-iteration (Why are you not changing the plan when the need arises?)

- the time that elapses between learning that you need some training and actually getting it. This metric applies to any physical resource as well, of course. Training seems particularly indicative of problems, however, because it's discounted by people who don't "get" agile.

- the stability of your teams

- the employee turnover rate

- team happiness/satisfaction levels. I'm reluctant to add this one because I don't know how to measure it directly. Turnover rate is related, of course, but we'd like to catch problems before people start quitting. I don't believe in the accuracy of surveys. Maybe you can add some ideas to the comments.

Look at the quality of your continuous-improvement culture, not at how long things take. In the earlier hiker analogy, the only way to become more productive is for everybody to move faster. The only way to do that is to have a continuous-improvement culture that infuses the entire organization. Focus on improvement, and productivity takes care of itself.

In addition to organization-level metrics, Lean metrics like throughput, cycle time, etc., can be worthwhile to the teams because they can identify areas for improvement. I'm a #NoEstimates guy, so I keep a rolling average of the number of stories completed per week so that I can make business projections.
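A rolling-average projection like the one described above takes only a few lines. This is a minimal sketch; the four-week window, the function names, and the sample counts are illustrative assumptions. Note that it counts finished stories, not points, so there's nothing to estimate.

```python
import math


def rolling_average(weekly_counts: list[int], window: int = 4) -> float:
    """Average number of stories completed per week over the most recent `window` weeks."""
    recent = weekly_counts[-window:]
    if not recent:
        raise ValueError("need at least one week of history")
    return sum(recent) / len(recent)


def weeks_to_finish(remaining_stories: int, weekly_counts: list[int], window: int = 4) -> int:
    """Project how many whole weeks it will take to deliver the remaining stories."""
    rate = rolling_average(weekly_counts, window)
    if rate <= 0:
        raise ValueError("no stories completed in the window; cannot project")
    return math.ceil(remaining_stories / rate)


# Hypothetical history: stories delivered in each of the last six weeks.
history = [6, 4, 5, 7, 6, 5]
print(f"rate: {rolling_average(history):.2f} stories/week")
print(f"40 stories will take about {weeks_to_finish(40, history)} weeks")
```

Recomputing the average after every week keeps the projection tied to what actually happened rather than to an up-front plan.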

The most useful production metric is the rate at which you get valuable software into your customer's hands. That's central to Agile thinking: "Working software is the primary measure of progress." You could simplify that notion to the number of stories you deliver to your customer every week. If you don't deliver, you've accomplished nothing. Also note that word, valuable. The rate of delivery is meaningless if the software isn’t valuable to the users.

And that notion brings us to the final and only performance metric that really matters: customer satisfaction, the only true indicator of value. That's a notoriously hard thing to measure, but there are indirect indicators. The easiest one is profit. If people are buying your product, then you've built something worthwhile. There are dicey metrics, too. NPS (Net Promoter Score), which asks whether you would recommend our product to your friends, falls into that category. No, I will not recommend your production monitoring system to my friends; the subject just doesn’t come up in normal conversation. Of course, you could (gasp) actually talk to your customers. Host a convention. Sponsor user groups and go to the meetings. Embed yourself in a corporate client for a few days.

An organization that's focusing on KPIs typically does none of this, and in fact, the whole notion of a KPI flies in the face of agile thinking. It's management's job to facilitate the work. The teams decide how the work is done, and how to improve it. You don't need KPIs for that, and I believe Deming would say that their absence doesn't matter because the teams can effectively manage the work without them.

Another useless KPI: number of releases in a period of time

Releasing typically produces potential value, not realized value, so measuring it gives a false sense of the value obtained. Only once a delivery is in use can realized value (or the lack thereof) be observed and acted on.

Another metric worth measuring: probabilistic throughput outcomes

This kind of piggybacks on the “improvement items made to your process over time”, but is still essential to keep separate. Daniel Vacanti is big on showing the throughput probability based on previous flow and cycle time. For example, “I am 50% confident we can deliver 25 items within the next 30 days.” How much risk is acceptable given the delivery and system you work in? If you want less risk but higher throughput, you need to optimize the flow.
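One common way to produce a statement like that is a Monte Carlo simulation over historical throughput. The sketch below is my own illustration, not necessarily Vacanti's exact method: it assumes a hypothetical record of items finished per day, replays randomly chosen days from that history to build thousands of possible 30-day futures, and reports the item count that at least the requested share of those futures reached.

```python
import random
from typing import Optional


def forecast_items(daily_throughput: list[int], days: int, confidence: float,
                   trials: int = 10_000, seed: Optional[int] = None) -> int:
    """Items we expect to finish within `days`, at the given confidence level.

    Each trial samples `days` values (with replacement) from past daily throughput.
    """
    if not daily_throughput:
        raise ValueError("need some throughput history")
    if not 0 < confidence <= 1:
        raise ValueError("confidence must be in (0, 1]")
    rng = random.Random(seed)
    totals = sorted(
        sum(rng.choice(daily_throughput) for _ in range(days))
        for _ in range(trials)
    )
    # At least `confidence` of the trials finished this many items or more.
    index = min(int((1 - confidence) * trials), trials - 1)
    return totals[index]


# Hypothetical history: items finished on each of the last ten working days.
history = [0, 1, 0, 2, 1, 0, 3, 1, 1, 0]
for level in (0.5, 0.85, 0.95):
    print(f"{level:.0%} confident: {forecast_items(history, 30, level, seed=7)} items in 30 days")
```

The higher the confidence you demand (the less risk you accept), the fewer items you can promise. Narrowing that gap means improving flow, not pushing harder.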

Dear Allen,

First of all, thanks for a brilliant summary that resonates so much with me. Pretending agility while keeping a traditional management KPI mentality is a real killer.

As for your point regarding team happiness: an easy practice is “mood marbles.” If the team is collocated, you just need 2 jars and a batch of green/red marbles. Ask team members to put in a marble matching their mood (red = “bad day”, green = “good day”) at the end of each day, and have the Scrum Master note the result of each day. It can give you alarms and/or trends.

For remote teams there are free tools on the web. Alternatively, it is not too difficult to program a happy/sad-face tool in house.
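A bare-bones version of such an in-house tool might just tally each day's green and red marbles and flag days when the recent share of "good days" dips. The sketch below is hypothetical throughout: the (green, red) input format, the three-day window, and the 60% alarm threshold are assumptions to tune, not part of the practice as described.

```python
def mood_alerts(days: list[tuple[int, int]], window: int = 3, threshold: float = 0.6) -> list[int]:
    """Return indexes of days where the rolling share of green marbles falls below `threshold`.

    Each entry in `days` is a (green, red) count for one day.
    """
    alerts = []
    for i in range(window - 1, len(days)):
        recent = days[i - window + 1 : i + 1]
        green = sum(g for g, _ in recent)
        total = sum(g + r for g, r in recent)
        if total and green / total < threshold:
            alerts.append(i)
    return alerts


# Hypothetical marble counts from a six-person team over seven days.
marbles = [(5, 1), (4, 2), (5, 1), (3, 3), (2, 4), (2, 4), (1, 5)]
print("days needing a conversation:", mood_alerts(marbles))
```

An alert is only a prompt to talk with the team, not a score.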

Cheers and thanks again for excellent reading.

Martin

“Focus on continuous process improvement, and productivity takes care of itself.” Love it. And so on point.

RE: team happiness/satisfaction levels

I’ve collected a toolbox of ways to visualize team health and wellbeing, the one metric that really matters, the one metric that is a leading indicator of just about everything important:

https://medium.com/@justsitthere/team-health-morale-checks-ed534816e53

Happiness is not the goal, resilience is. I view these visualization tools as “wellness checks”: catch the high cholesterol well before the heart attack.

Very good read…thanks a bunch for this.

I’d love to be a contractor working at your house… yeah… about that water leak… I’ll be done when I’m done – and the more I think about it, I might not even fix your problem. Let’s experiment and see! The important part is that I’m talking to you on a regular basis (don’t mind that big bill). I’m not entirely opposed to many of the viewpoints in the article, but some of the “worthless” KPIs do in reality drive important conversations. Velocity is a long-term forecast indicator and, for a given team, can do a decent job of showing whether a decomposed project is deliverable in a given time frame. Understanding that there is a margin of error is key. The Sales dept needs to know when they will be selling something (within reason). Basic human nature frequently makes work expand to fill the time available… and milestones (short-term goals) can balance the load of project pressure and help determine appropriate solutions for issues. At the end of the day it’s called work, not an exercise in self-improvement pleasure. I do like (and use) the joy poll. Mood marbles might be a bit much.

Hi Pat, that’s the usual critique that people who don’t understand Agile make of Agile processes, Steve McConnell being the best-known example. You can watch a video of Steve’s critique of #NoEstimates here, and Ron Jeffries (one of the founders of XP) has a good rebuttal here, along with a great critique of estimation in general in his article Estimation is Evil.

The main flaw in your metaphor is that we’re not building houses, so comparisons with the building trades really don’t hold water. Software developers are inventing stuff, not using off-the shelf parts to solve well understood problems. Moreover, they’re doing that with very inaccurate and incomplete specifications. It’s as if you were building a house, but you had to invent plumbing to do it, and your customer didn’t really know what they wanted. Having a fixed scope and a fixed delivery date really doesn’t work in that world, and in any event, that thinking has failed in most waterfall projects for half a century. There are plenty of Agile companies (in fact, by some measures, 80% of companies are Agile, though you should probably cut that number in half to be realistic), so your critique doesn’t really hold water. The stuff really does work in the real world.

Of course, many Agile projects have fixed delivery dates. Usually, you solve that problem by varying scope based on projections, which are themselves based on hard measurements. That approach was first proposed by David Anderson in a Kanban context almost 20 years ago, and has been used at many successful companies since then (including Microsoft). It’s been expanded recently by the #NoEstimates movement. You can watch my short introduction to #NoEstimates here.

This is such a great comment. People forget that as software engineers we most of the time are just pasting together known things but are often needing to invent solutions. The impedance mismatch between this reality and management is the main cause of engineers losing ground in almost any organization. I would like to work for you!

Meant to say we are NOT just pasting together solutions, but rather often need to creatively craft new solutions for which there is no established pattern or playbook. This is less like construction and more like invention, as you mention, and invention is an art form.

> Software developers are inventing stuff, not using off-the shelf parts to solve well understood problems.

In many cases that’s exactly what software developers do, though.

And in many more cases, they don’t. What’s your point, exactly? That there are exceptions that are awful places to work, based on a surveillance culture and violent management? Sure. They exist. They’re pretty much beyond saving, though.

Totally agree with Allen. Building a house is a poor metaphor for software development.

As I wrote elsewhere, KPIs are for losers. I suggest you read Scott Adams’s “How to Fail at Almost Everything and Still Win Big” in which he explains why goals are for losers, systems are for winners.

Agile is a system in which, by design, you maximize the throughput of value produced by the team. That means that you could not produce more value with the capacity at hand. That’s it: you don’t need a KPI. You just need a system to increase value productivity. This system is known as continuous improvement.

Also I suggest that you re-read the (agile) prime directive: “Regardless of what we discover, we understand and truly believe that everyone did the best job they could, given what they knew at the time, their skills and abilities, the resources available, and the situation at hand.” It tells why KPIs are useless.

Allen – I like where you’re going and feel like there has been pushback on estimation concepts for years based on their use as performance measures, not as a planning or capacity tool. Measuring in Agile is a religious hot-button issue and I often feel as if there is no one answer for everyone. It depends on the company, products, current culture, desired outcomes, etc.

In many of the great points you make, I see an organization that is well along in the more basic and fundamental “agile” concepts such as great collaboration, experimentation, TDD, etc. The problem, Allen, is that they have to start somewhere, and it’s usually from a very dark place. You describe a mature agile environment, while most of those we are trying to help are not even close to that point. In turn, we need to take baby steps and, over time and with much pain, get them to the state you describe above.

Meanwhile, we need to feed milk before T-bones. We need to take a more pragmatic approach that doesn’t tear the spine out of a traditionally run organization, and let them mature over time into one that has the type of trust, adaptability, experimentation and continuous learning we all hope they can get to. What I would love to see is an article taking the slower learning curves and maturity states into consideration as opposed to saying we should all be this “one way” in order to declare success. It’s simply not practical; almost irresponsible.

With due respect, I also strongly disagree with the suggested performance measures you outline in terms of counting numbers. The measurements you describe above almost all relate to quantity. I can absolutely game just about every one to make me and my team look and feel better about ourselves. You touch on outcomes as opposed to quantities… maybe you should have stuck with that.

Thanks though Allen…thought provoking stuff here.

Robert. Disagree! Heavens! :-). Actually, I disagree with using these as “performance” metrics. They aren’t. They’re ways to measure how far along your team is on its path to agility. Since they aren’t measuring performance, game-ability isn’t really an issue because there’s no real benefit to gaming them. They’re there so that you can judge your own progress.

I have found it interesting that many note that KPIs are destructive, and that one needs to simply maximize value. But how does one measure value? Don’t you have to measure value? Isn’t value your highest-level KPI? To think all is good as long as the team is happy and the customer is happy is to assume that you’re producing something of value.

It seems to me that if you are selling software, you have a number of metrics, including sales revenue, profit, market share, etc., that contribute to the value you are producing. Sure, existing customers may be happy, but if your competitors are growing market share at your expense, you aren’t producing (enough) value fast enough. You may need to add resources/teams, or better prioritize stories, or increase the rate of your continuous process improvement.

If you are producing software for internal consumption, the measure of value may be different, but still needs to exist. Are internal departments improving productivity due to your delivered software? Have they reduced staff? Maintained staff as business grew? Cut other expenses? Improved/increased output by some measure?

Without a measure of value, how would a team (and management) know they are “done”, and that there are other projects/products to invest in that produce greater value?

@George: Thank you for your enlightening comment.

Actually, one cannot measure value. That’s the usual illusion of ROI (Return On Investment). There’s no such thing as ROI. There’s only ROI after 1 year, which is different from ROI after 2 years, which is also different from ROI after 3 years. Value is a cumulative quantity, so if you really want to measure it, you have to take time. There may be exceptions, like movies, in which most of the income is made during the first month. But in my experience, the time scale is often several months or years.

To be precise, value-driven management would be better named “expected value vs. expected cost”-driven management. Each user story is the expression of several bets in a moving context. A bet for the value it may bring in the future. A bet for the cost it will need to be delivered. And a bet for the cost of operations once delivered.

We can measure income/revenue and we can measure costs: that will tell us how good we are at making bets. However, we will only know months after the delivery, which is far too late for a feedback loop.

Also, in my experience, the ones that want KPIs want to assess the performance of the team, not the performance of the product. They are scared of assessing product performance because they could be held accountable. They only want to know if the team works hard enough. They want to confirm that this bunch of little suckers called developers is stealing their money. No wonder teams are reluctant to accept KPIs: it’s a fool’s game.

I agree that if you are only concerned with keeping developers under your thumb, then KPIs are a fool’s game. And macro measures like sales and profit are not immediate, not actionable from iteration to iteration, and not a measure of productivity.

However, as a measure of effectiveness (vs. efficiency) we may just disagree with the time frame for evaluation of value. Certainly, this will differ depending on industry, but in today’s environment, 2-3 year valuation is immaterial. In 2-3 years, we’ve often moved on to new software/new products. If you haven’t quantitatively realized value in months/quarters due to your incremental, iterative functionality, you are likely not going to see it. If you’re in front, the competition has likely matched you in 6-12 months. Your effort is no longer a competitive advantage or a reason for consumers to spend money. It’s table stakes.

Can value equal customer satisfaction, then? If we can’t measure it tangibly, would “Yes, I am satisfied” or “I am satisfied, 10 out of 10” count, too? Value surely has an emotional part, too; customers are humans. They have their expectations, and meeting them can, to my mind, count as providing value.

Very good points made here by Allen Holub.

In many ways the current Agile movement does not produce quality. Because of its time-boxing approach (itself a poorly chosen kind of KPI) and the associated culture, quality is usually low, resulting in high cost and wasted time.

Approaching agile thinking based on the ideas of Japanese quality management (Toyota / Dr. W. Edwards Deming) and using views from Goldratt (Theory of Constraints) are necessary to repair fundamental flaws in the current Agile movement.

That measure/manage quote is attributed to Drucker, btw:

“If you can’t measure it, you can’t manage it.”

Lean Startup espouses the use of leading indicators as proxies for outcomes, which allows for metrics that are useful for assessing the value or value potential created by a team. It may take a long time to measure actual revenue impact, but the number of conversions from a free 30-day trial to paid membership, or the number of referrals generated by early adopters of a new product, are examples of good quantitative metrics that shed light on value but avoid the pitfalls of output KPIs, which are subject to the problems described above. (Loosely paraphrasing “The Startup Way.”)

Fantastic, great discussion and so many valid points in one place – thank you!

Allen,

Thanks for sharing your perspectives – really good stuff 🙂

Regarding this topic: how do you see OKR as a system to create a shared understanding and keep the business focus on the most important stuff?

Regards,

Sharon

Sharon, my main problem with OKR is that it implies a hierarchical organization where somebody in a “management” role is imposing goals based on quarterly planning. That’s about as waterfall as you can get. Your planning should be much more dynamic than that, should be happening continuously, and should be done collaboratively, not by command-and-control “leadership.” A more Agile equivalent would be something like Mike Rother’s “Improvement Kata” (described in the book Toyota Kata). The best way to keep business focus is for “Business people and developers [to] work together daily throughout the project.” When the Agile Manifesto says “daily,” it means “daily.”

Hey Allen! Great read…

One of my responsibilities is to work with other internal teams on agile practices (we agree on not using velocity or those individual KPIs as performance metrics), which has led us to track some metrics, and I would love your take on them.

Sprint Commitment – Several teams deal with a high volume of work added mid-sprint that has nothing to do with the sprint plan or their feature development and still gets handled by the team (lots of unplanned cross-team dependencies & blockers). Until we can improve that, seeing which teams consistently plan more work than is feasible and encouraging them to plan an achievable amount of work seems like a way sprint commitment can be used effectively.

Defect Density, combined with Rework Rate (how much work in the sprint keeps getting kicked back from a code review/QA check): This is not a KPI, but I believe it to be a good metric and would provide guidance to a team. A team with low rework and high defects may need to slow down and implement some practices (TDD?). A team with high rework and high defects may be focusing on quality for the first time (finding more bugs, kicking more throwaway code back before it hits production).

I would really like your thoughts on this. Thank you!

Thanks, Anthony. Frankly, I don’t think either of those are useful metrics. First, there is no such thing as a “sprint commitment.” In Scrum, you adjust the work dynamically as the sprint progresses and you learn more about what you’re doing. That’s one of the reasons that it’s critical for the PO to be easily accessible throughout the entire sprint. There is absolutely no requirement to “Plan an achievable amount of work.” Instead, make a good guess, and then adjust the scope of the work as the sprint progresses. I’d add that things will be way better if, instead of trying to guess what “size” a story is, you instead narrow all stories down to 1 or 2 days (forget about points; they’re worthless and are not part of Scrum) in your initial sprint planning. Then implement them in value order. If you don’t get a relatively low-value 1-day story done, it’s no big deal. If you haven’t recently, I’d suggest that you spend some time with the Scrum Guide to see how Scrum is supposed to work :-).

That brings me to an actual metric that’s worth tracking: throughput (the average number of stories that you complete in a sprint). If you also track your “split rate” (the average number of 1-2 day stories that you split out of a larger one on the backlog), you can make pretty accurate projections about the rate that you’ll be going through the backlog.
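Here's a minimal sketch of that projection, assuming the split rate is the average number of 1-2 day stories that past large backlog items broke into, and that throughput is the average number of those small stories finished per sprint. The function names and numbers are hypothetical.

```python
import math
from statistics import mean


def split_rate(stories_per_split_item: list[int]) -> float:
    """Average number of small stories each large backlog item has split into so far."""
    return float(mean(stories_per_split_item))


def sprints_to_drain(unsplit_items: int, rate: float, throughput_per_sprint: float) -> int:
    """Whole sprints needed to deliver the small stories the backlog is expected to become."""
    if throughput_per_sprint <= 0:
        raise ValueError("throughput must be positive")
    expected_stories = unsplit_items * rate
    return math.ceil(expected_stories / throughput_per_sprint)


# Hypothetical history: four past items split into 3, 4, 2, and 5 stories.
rate = split_rate([3, 4, 2, 5])  # 3.5 stories per item
print(f"split rate {rate}, projected sprints: {sprints_to_drain(30, rate, throughput_per_sprint=12)}")
```

Both inputs are observations rather than guesses, so the projection improves on its own as history accumulates.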

Defect density is another worthless metric. The only acceptable known-bug count at the end of a sprint is zero. If you have any known bugs, you’re not ‘done’ and your sprint is not over. You’ll find that teams that take quality this seriously work faster than teams that ship buggy code. It’s just easier to work on code if it’s bug-free. TDD, in fact, is an essential practice that you should always do because it speeds up the rate of work. If you’re not doing TDD, you’re deliberately cutting your productivity by a significant amount. Similarly, you should have no “throwaway code.” Write exactly what you need to satisfy the “done” criteria of your story. Any defect density >0 is a serious process defect that you need to correct immediately. If a team has “high rework and high defects,” it needs to completely rethink the way that it’s working. If this situation exists because the team was pressured to “deliver,” then you are not working in an Agile organization, and you need to work on that.

Thank you for replying, Allen, and I appreciate the feedback!

You raised great points. To your comment, we’re not yet an agile organization; we’re on the journey to get there, with a lot of work ahead. Suffice it to say that our roles and practices don’t yet match up with Scrum as well as we’d like.

You’re right, there is no longer a sprint commitment in the guide; I should have said forecast. What we encounter is that many teams’ sprints are derailed by dependencies on other teams, making the forecast only loosely related to the work done, which causes impacts throughout the org. We are working with teams, doing deep dives and changing practices to address this. In the meantime, when the majority of a team’s work is often unrelated to the Sprint goal (or to what the team discussed, planned, or forecast), we felt that the metric is helpful in identifying trends and causes and in deciding where to start.

Throughput seems related to cycle time, and makes sense. I’d never heard of split rate, but I like the concept and will do some digging. I’ve encouraged similar sizing and breaking large stories down into manageable pieces before, but I didn’t specify what that size looked like.

For defect density, I wasn’t clear in my previous comment. I mean bugs in the wild vs. rework within a sprint (we see that review and testing inside the sprint result in fewer production bugs after the sprint). Teams and the engineering org are implementing better quality practices. I agree fully that bugs found in the sprint should be fixed before deploy, but for those found after launch, information about them would be useful in seeing where to focus help first.

Thank you again for your insights!

Actually this comment would be a great addition to the article itself.

How do we know if we’re becoming a better scrum team? We could use measures that, taken together, could indicate whether we are improving or not.

Some suggested measures: Lead time, Team motivation, Customer satisfaction, Software quality, Software maintainability.

Taken in isolation and used by managers to drive performance, these measures will almost certainly lead to unintended and negative consequences. But taken together, and used by the team, they can help indicate whether improvement experiments have been successful or not.

Brilliant and a bold article for the community.

Yes, over time, velocity and estimates are more like the charts in the share or equity market! (Volatile at times.)

Building software is more of an art with a taste of tech! (Yes, tech is an important factor, and tech can be measured, but how do you measure art?)

But KPI- and metrics-based measurement is more theoretical management (and most of us were educated the same way: marks, rank, grade, first in class), which is the basis for most business-model valuations.

This article gives some good ways to gauge team performance and satisfaction (like the number of discussions with the PO, new experiments tried in the sprint, etc.).

But were we really satisfied with the grades and marks we obtained? A point to think about!!

Good morning, guys!! 😂

Hello Allen, let me just provide a friendly challenge here 🙂

Even if these are not KPIs for measuring the performance of a team, I’m assuming here that we want to measure and follow these metrics and make sure they go up and stay high.

1. “the number of improvements you’ve made to your process over time.”

– Well, that doesn’t tell me how to improve; it just measures how good you are at changing things around. But are the changes for the better or worse? Who knows. One time it’s 2 changes, then it’s 5, then it’s 1. So what? What does that tell me?

2. “the number of tests you write before you code”

– Well, that doesn’t tell me how much value you deliver; it just encourages us to overspend our time on tests and provides an excuse not to ship value, e.g. when one or two tests would have more than sufficed.

3. “the number of experiments you’ve performed”

– Well, that doesn’t tell me how much value you deliver or how to get better; it only shows how good you are at tinkering. Are all experiments created equal? Is each experiment always worth “1x value unit”? Will 3 experiments always be better than 1 experiment, regardless of how well or poorly they are designed?

4. “the number of process and institutional changes instigated by those experiments”

– same as #1

5. “the number of things you’ve learned in the past month”

– OK, sounds good, but the same challenge applies as for #3: is every instance of learning equal in its weight and value?

6. “the number of validated business-level hypotheses you’ve developed”

– Same as velocity points, just less informative, because it’s just a number of items. What if 1 high-difficulty initiative turned out to deliver tremendous value, while 3 other initiatives each turned out to be meh, though at least we got 3 learnings? Who is to say which is more valuable?

7. “the number of times a week you talk to an actual customer”

– … encourages arbitrary meetings, or worse yet, quotas, instead of shipping.

8. “the number of changes to your backlog (if it’s not changing, why not?)”

– … encourages arbitrary changes and doesn’t tell me if value is delivered. Should we change more or less? It doesn’t say.

Without going through the whole list, the point here, and I agree, is that there cannot be a silver-bullet measurement or KPI, no matter how pure or “agile” at heart it is. Every single metric could be twisted, hacked and inflated if your reward depends on it.

My guess is that a true KPI will be something akin to string theory. A model far too complex for our current ability to quantify it.

Or perhaps a KPI of a team is a non-starter, just like trying to measure the performance of a single knight or pawn in a chess game.

Thanks for the thought-provoking article! I’ve enjoyed thinking about it.

Hi Miks,

The thread running through your challenge is on the mark, I think. We need to be focusing on delivering value. I wish I had a way to measure value, but I don’t 🙂

While individually these metrics don’t measure value, the collection of them can indicate how delivering value is enabled. Counting how many improvements were made relies on trusting the team to make an improvement and then improve upon it, even if that means going back to the old way. Counting tests doesn’t deliver value but, to a point, more test cases lead to better coverage, which leads to better quality, more efficiency, and more value per dollar spent (or lower maintenance cost). Layering on test coverage would be valuable. On testing more broadly, I want to encourage (measure?) the development of test scenarios and test cases, not just tests. That is what enables better test coverage.

The other suggested or brain-stormed metrics follow the same line. Individually, they don’t measure value but together, when trending “upward” will lead to more value.

Yes, but. First, even the Accelerate metrics are easy to abuse, particularly if the focus is on “productivity” or output instead of value delivery. If you use them, you have to be very careful. Ideally, they won’t escape the team. Counting tests tells you virtually nothing from a value perspective. Neither does code coverage. You can have massive numbers of tests that cover 100% of the code and still not know if the code does anything useful for an actual user or implements valid business rules or processes. “Value” is not some code word for “well tested.” It means that your customers actually find the software useful, that it makes their lives better in some way. Your notion of determining “value per dollar spent” from any metric is simply not workable, and there is zero connection between testing and value. Tests do not answer the question “does this code make your life better.” That’s value.

Hello Allen, thanks for a fascinating article and the comments it generated. I appreciate that you question some of the agile management practices. I have worked in software businesses using agile but am not an engineer or development leader and would like to better understand how development organizations’ performance can appropriately be measured or assessed.

I’ve had discussions with development leaders about how to evaluate team and overall development organization performance, and it’s a tough question. The responses varied but usually included comments about velocity, burn down, defects/rework and “over time we can sense if a team is delivering well.” I agree with your views on velocity. It seems like good velocity performance using points is not much different than planning to work 40 hours and then actually working 40. It doesn’t say anything about the quantity, quality or timing of the output and results that the company and its customers are depending on.

I also like your reference to Goldratt’s hikers – the delivery of the whole team is most important. In a software business the development organization represents much, but certainly not all, of the engine needed to produce, enhance and maintain quality products. What indicators would tell us whether the development organization is the first, second or third hiker? And if the third hiker, how far behind the first and second hiker?

Another couple ways to consider the question:

What performance indicators or other information would a candidate for a development leader position use to assess the performance of the organization he or she would lead?

What development team goals or performance indicator targets might a VP of development set with the CEO that would make the CEO comfortable that the company would be able to deliver on its plans and commitments?

I’d appreciate your thoughts on these questions.

Thanks, Allen! A very provocative and insightful article (as usual). I’d like to be provocative too and challenge your way of thinking a bit. If the ultimate goal is delivering value, could you imagine the following situations:

– teams perform lots of experiments, learn lots of things, but provide low value

– teams call the customer a lot during the week because there is a problem. If everything is smooth, they see the customer only at specific scheduled meetings

– the backlog changes a lot because the customer’s requirements change all the time (this is driving the team crazy and happiness goes down)

– the ratio of implemented to non-implemented customer-driven changes requested mid-iteration: what if these changes are unrelated to the functionality in the iteration and could be done in the next iteration with a little more upfront thinking? Otherwise, they will contribute to a loss of focus and be a distraction

– the time that elapses between learning that you need some training and actually getting it – what if you are constantly getting the training right away because you simply don’t produce value and spend majority of your time learning?

– the team is happy, but incredibly slow, because they focus not on value but on individual learning; they write beautiful code that takes ages

From a developer perspective, the article makes total sense! If we are customer-oriented, we should think about metrics that make sense and provide value to the customer. All customers want a high-quality product delivered in the shortest time possible. They will be able to assess the quality after delivery. So the first question they always ask is about time: “When will it be done?” They need that answer to plan further integrations, commercial campaigns, etc. Our chosen metrics should also reflect this customer need. I think you have the quality metrics covered well :)

Great article Allen! I see a lot of passion in the comments.

I think it’s important to remember a couple of things.

1. Software is a means, not an end.

2. The customer is the arbiter of value.

Excellent article! It explains a lot of what I have been preaching at my company, but I never managed to structure it like this. Very useful.

In terms of what has worked for team happiness/satisfaction levels:

I conduct anonymized sprint happiness surveys with Microsoft Forms, which I track over time.

I also use Retrium as a remote retrospective tool, and it has a very nice feature called radar.

Again, this is anonymous data, but it surfaces problems early so that we can talk about them.

I picked a few radars to track over time. I rotate them, or I pick one when I observe that the team encountered relevant issues during the sprint.